“We are at risk of turning into the machines we create.” Iain McGilchrist

How can we interact with machines without thinking like a machine? Without moving like a machine?

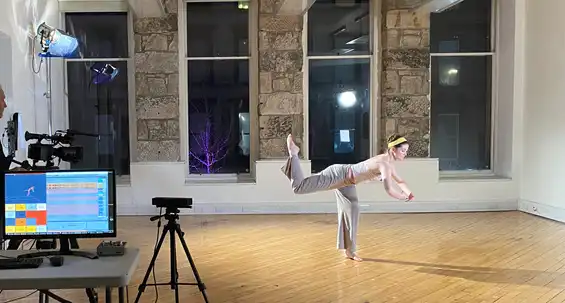

The Wilds is ongoing artistic research begun in 2020 exploring how AI can unlock new forms of interaction between humans and machines. The goal is to let humans remain wild humans rather than being domesticated into machine operators. I use unsupervised AI to analyse human dance, then generate movement-based interfaces.

The AI doesn't have our common preconceptions about what a body should do when it interacts with a computer. Instead, it creates something wild and unpredictable by trying to use the body's full range of movement.

See below for interactive installations, an interactive dance performance, texts and audio-visual works.

Concept

There are three critical focuses:

1. Embodiment. Work with our non-rational intelligence. Embrace the full complexity of the moving body without classifying it into ‘gestures’ or other reductive abstractions. Being with instead of using the interface. Play and resonance over the manipulation of virtual realms.

2. Emergence (Non-designed Interaction). Let interaction between human and machine emerge from their existing affordances and ways of being. Build tools for expression that aren't infused with the preconceptions of their designers. Embrace not knowing.

3. Extreme Individualisation. Remember, a perfectly unbiased AI is one that eliminates individual differences. What happens if we go for maximal bias and each have our own AI? Avoid appropriating the creativity of others by training on myself. AI that is all of me rather than a tiny bit of everyone.

The Wilds rejects inevitablism, instead approaching AI as an opportunity to detangle our minds from the hyper-rationalism of our technological epoch.

Points 1 and 2 are critical of the general tendency of interactive technologies to marginalise the human body and to funnel human expression into predefined forms (posts, selfies, etc.). My manifesto Against Interaction Design articulates this in more detail.

Here, AI is a beacon of hope. Works like Sonified Body speculate on more human-friendly forms of human-computer interaction rooted in embodiment, resonance and feelings of belonging.

Point 3 is critical of AI itself: its tendency to appropriate human creativity and to seduce us into homogeneous forms of expression. Self Absorbed addresses this by refocusing AI's extractive capabilities entirely onto myself.

Interactive Works

Audio-visual Works

Texts

Against Interaction Design

“The more interaction is designed, the more human agency becomes constrained to fit within the models conceived by the designer.” A short manifesto that distils a position that’s emerged through a decade of creating interactive art.

reference

T. Murray-Browne, “Against Interaction Design,” Leonardo 58(3):316-318, 2025. [arXiv:2210.06467]

bibtex

@article{murray2025against-interaction-design,
  title={Against Interaction Design},
  author={Murray-Browne, Tim},
  journal={Leonardo},
  volume={58},
  number={3},
  pages={316--318},
  year={2025},
  publisher={MIT Press},
  address={One Rogers Street, Cambridge, MA 02142-1209, USA}
}

Emergent Interfaces: Vague, Complex, Bespoke and Embodied Interaction between Humans and Computers

Academic paper detailing the philosophical underpinning of my project The Wilds. Human-computer interaction is currently dominated by a paradigm where abstract representations are manipulated. How can we instead build interfaces out of our capacity for emergence and resonance?

reference

T. Murray-Browne and P. Tigas, “Emergent Interfaces: Vague, Complex, Bespoke and Embodied Interaction between Humans and Computers,” Applied Sciences 11(18):8531, 2021.

Abstract

Most human–computer interfaces are built on the paradigm of manipulating abstract representations. This can be limiting when computers are used in artistic performance or as mediators of social connection, where we rely on qualities of embodied thinking: intuition, context, resonance, ambiguity and fluidity. We explore an alternative approach to designing interaction that we call the emergent interface: interaction leveraging unsupervised machine learning to replace designed abstractions with contextually derived emergent representations. The approach offers opportunities to create interfaces bespoke to a single individual, to continually evolve and adapt the interface in line with that individual’s needs and affordances, and to bridge more deeply with the complex and imprecise interaction that defines much of our non-digital communication. We explore this approach through artistic research rooted in music, dance and AI with the partially emergent system Sonified Body. The system maps the moving body into sound using an emergent representation of the body derived from a corpus of improvised movement from the first author. We explore this system in a residency with three dancers. We reflect on the broader implications and challenges of this alternative way of thinking about interaction, and how far it may help users avoid being limited by the assumptions of a system’s designer.

bibtex

@article{murraybrowne2021emergent-interfaces,
  author = {Murray-Browne, Tim and Tigas, Panagiotis},
  journal = {Applied Sciences},
  number = {18},
  pages = {8531},
  title = {Emergent Interfaces: Vague, Complex, Bespoke and Embodied Interaction between Humans and Computers},
  volume = {11},
  year = {2021}
}

Authored and Unauthored Content: A Newly Necessary Distinction

Authored and unauthored media have always been here. But AI makes them indistinguishable.

Latent Mappings: Generating Open-Ended Expressive Mappings Using Variational Autoencoders

A short technical paper for the NIME conference describing how AI is used in Sonified Body to interpret human movement to control live sound.

reference

T. Murray-Browne and P. Tigas, “Latent Mappings: Generating Open-Ended Expressive Mappings Using Variational Autoencoders,” in Proceedings of the International Conference on New Interfaces for Musical Expression (NIME), online, 2021.

Abstract

In many contexts, creating mappings for gestural interactions can form part of an artistic process. Creators seeking a mapping that is expressive, novel, and affords them a sense of authorship may not know how to program it up in a signal processing patch. Tools like Wekinator [1] and MIMIC [2] allow creators to use supervised machine learning to learn mappings from example input/output pairings. However, a creator may know a good mapping when they encounter it yet start with little sense of what the inputs or outputs should be. We call this an open-ended mapping process. Addressing this need, we introduce the latent mapping, which leverages the latent space of an unsupervised machine learning algorithm such as a Variational Autoencoder trained on a corpus of unlabelled gestural data from the creator. We illustrate it with Sonified Body, a system mapping full-body movement to sound which we explore in a residency with three dancers.
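
As a rough illustration of the latent mapping described in this abstract, here is a minimal Python sketch. It is not the authors' implementation: the weights are random rather than trained, and every name (`ToyEncoder`, `latent_to_controls`, the 6-value pose, the 2-dimensional latent space) is hypothetical. It shows only the data flow, in which a pose vector is encoded to a latent Gaussian and the latent coordinates are squashed into a range a sound parameter could take.

```python
import math
import random


def make_layer(n_in, n_out, rng):
    """Random weights standing in for trained parameters (assumption)."""
    scale = 1.0 / math.sqrt(n_in)
    weights = [[rng.gauss(0.0, scale) for _ in range(n_in)] for _ in range(n_out)]
    return weights, [0.0] * n_out


def apply_layer(layer, x):
    """Affine map: one weighted sum plus bias per output unit."""
    weights, biases = layer
    return [sum(w * v for w, v in zip(row, x)) + b for row, b in zip(weights, biases)]


class ToyEncoder:
    """Encoder half of a VAE: pose vector -> mean and log-variance of q(z | pose)."""

    def __init__(self, pose_dim, hidden_dim, latent_dim, seed=0):
        rng = random.Random(seed)
        self.hidden = make_layer(pose_dim, hidden_dim, rng)
        self.to_mean = make_layer(hidden_dim, latent_dim, rng)
        self.to_log_var = make_layer(hidden_dim, latent_dim, rng)

    def encode(self, pose):
        h = [math.tanh(v) for v in apply_layer(self.hidden, pose)]
        return apply_layer(self.to_mean, h), apply_layer(self.to_log_var, h)


def sample_latent(mean, log_var, rng):
    """Reparameterisation used in training: z = mean + sigma * eps, eps ~ N(0, 1)."""
    return [m + math.exp(0.5 * lv) * rng.gauss(0.0, 1.0) for m, lv in zip(mean, log_var)]


def kl_to_prior(mean, log_var):
    """KL(q(z | pose) || N(0, I)), the regulariser in a VAE training loss."""
    return -0.5 * sum(1.0 + lv - m * m - math.exp(lv) for m, lv in zip(mean, log_var))


def latent_to_controls(z):
    """Squash each latent coordinate into (0, 1) for use as a sound parameter."""
    return [1.0 / (1.0 + math.exp(-v)) for v in z]


# Hypothetical 6-value pose (e.g. a few joint coordinates) and a 2-D latent space.
encoder = ToyEncoder(pose_dim=6, hidden_dim=8, latent_dim=2)
pose = [0.1, -0.4, 0.9, 0.0, 0.3, -0.2]
mean, log_var = encoder.encode(pose)
z_train = sample_latent(mean, log_var, random.Random(1))  # stochastic, as in training
controls = latent_to_controls(mean)  # deterministic (a choice made for this sketch)
print(controls, kl_to_prior(mean, log_var))
```

In a real system the encoder would first be trained, together with a decoder, on a corpus of unlabelled movement data. Using the latent mean rather than a sample at performance time is a choice made here for repeatability, not something the abstract specifies.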

bibtex

@inproceedings{murray-browne2021latent-mappings,
  author = {Murray-Browne, Tim and Tigas, Panagiotis},
  booktitle = {International Conference on New Interfaces for Musical Expression},
  day = {29},
  doi = {10.21428/92fbeb44.9d4bcd4b},
  month = {4},
  note = {\url{https://doi.org/10.21428/92fbeb44.9d4bcd4b}},
  title = {Latent Mappings: Generating Open-Ended Expressive Mappings Using Variational Autoencoders},
  url = {https://doi.org/10.21428/92fbeb44.9d4bcd4b},
  year = {2021}
}

Acknowledgements

This project kicked off through Sonified Body, a collaboration with artist and AI researcher Panagiotis Tigas, which was funded by Creative Scotland with support from Present Futures festival, the Centre for Contemporary Art (Glasgow), Feral and Preverbal.

Sonified Body developed through residencies with dancers Adilso Machado, Catriona Robertson and Divine Tasinda and film-maker Alan Paterson, with mentoring from Ghislaine Boddington.

Self Absorbed developed with support from the Milieux Institute, Concordia University, Montreal.